Solution overview

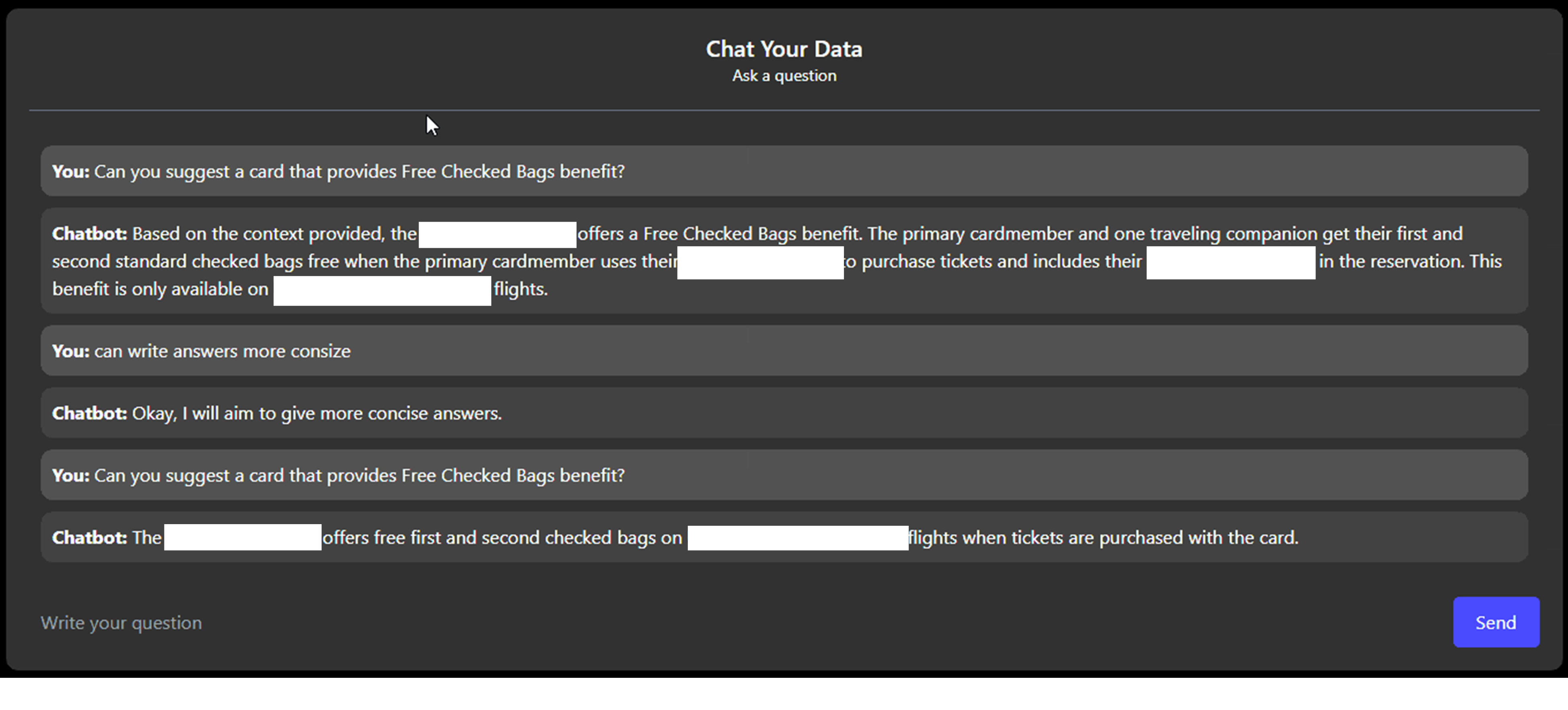

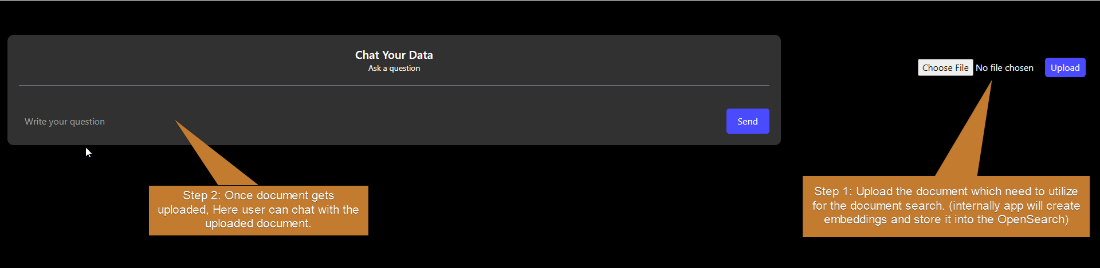

Combining technology and business strategy, the solution supports customer acquisition and accelerates customer growth. The UI-based application features a Q&A screen where customers can ask specific questions about various credit cards, for example about benefits such as Priority Boarding or Free Checked Bags limits.

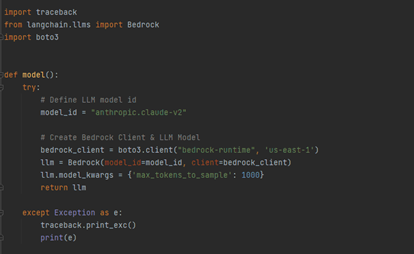

Behind the scenes, Anthropic’s Claude LLM processes a document that describes the co-branded credit card options along with their benefits, features, and terms.

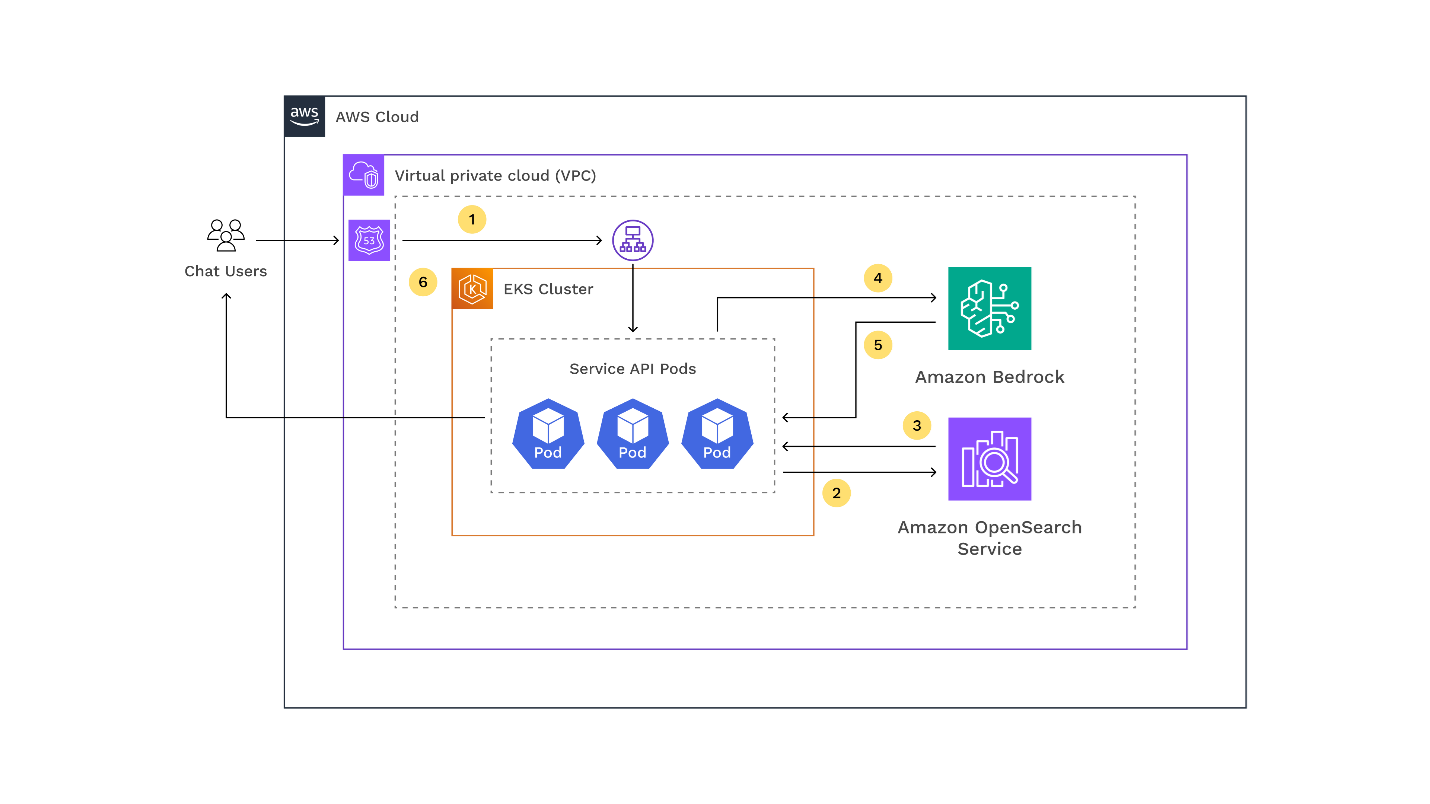

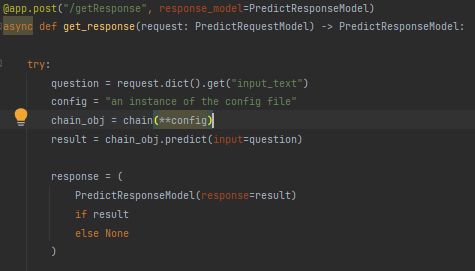

Here is how it works:

1. The user sends a request to the GenAI application hosted on the Amazon EKS cluster

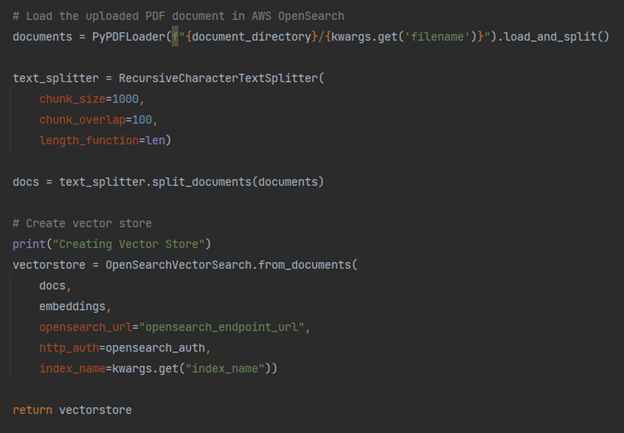

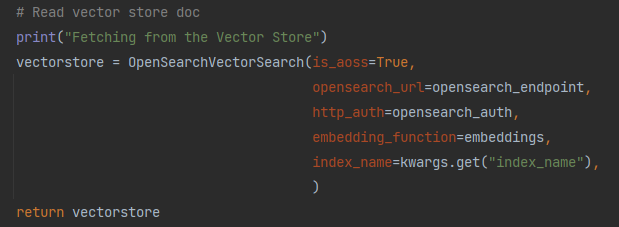

2. An Amazon OpenSearch-powered vector store, which holds the embedded data and handles vector search, searches the document describing the co-branded credit card options

3. The index returns search results with relevant excerpts from the uploaded document

4. The application sends the question to the LLM, with the data retrieved from the index as context

5. The LLM generates a brief response to the user’s request based on the retrieved data

6. The LLM's response is returned to the user
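
The steps above follow a retrieve-then-generate pattern, which can be sketched in a few lines of Python. This is only an illustration, not the solution's actual code: `build_prompt`, `answer_question`, `keyword_search`, `echo_model`, and the sample excerpts are all hypothetical names and data. In the real application the `search` callable would presumably query the Amazon OpenSearch vector index and the `generate` callable would call Claude through Amazon Bedrock.

```python
from typing import Callable, List


def build_prompt(question: str, excerpts: List[str]) -> str:
    """Combine the retrieved excerpts and the user's question into one prompt."""
    context = "\n\n".join(
        f"[{i}] {text}" for i, text in enumerate(excerpts, start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


def answer_question(
    question: str,
    search: Callable[[str, int], List[str]],
    generate: Callable[[str], str],
    top_k: int = 3,
) -> str:
    """Retrieve relevant excerpts, assemble the prompt, and ask the model."""
    excerpts = search(question, top_k)         # index returns relevant excerpts
    prompt = build_prompt(question, excerpts)  # question plus retrieved context
    return generate(prompt)                    # model reply goes back to the user


# Hypothetical stand-ins so the sketch runs without any AWS services.
SAMPLE_EXCERPTS = [
    "Card A: first checked bag free for the cardholder.",
    "Card B: priority boarding on every flight.",
]


def keyword_search(query: str, k: int) -> List[str]:
    """Toy retriever: rank excerpts by how many query words they share."""
    words = set(query.lower().split())
    ranked = sorted(
        SAMPLE_EXCERPTS,
        key=lambda doc: len(words & set(doc.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def echo_model(prompt: str) -> str:
    """Toy model: reports the prompt size instead of calling an LLM."""
    return f"(model reply to a {len(prompt)}-character prompt)"


if __name__ == "__main__":
    print(answer_question("Which card has a free checked bag?", keyword_search, echo_model))
```

Passing the retriever and the model in as callables keeps the flow testable without a cluster or a model endpoint; either one can be swapped for a real client later.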

Based on the response, customers can make informed choices tailored to their needs. The application serves both as a Q&A tool and as a recommendation tool, so users can also ask for card recommendations based on their preferences. Users can request concise responses with simple prompts.
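
As one possible illustration of requesting concise responses, the sketch below builds a request body in the Messages format that Amazon Bedrock accepts for Anthropic Claude models. Several things here are assumptions rather than details from the solution: the function name `build_claude_request`, the wording of the system prompt, the `max_tokens` default, and the choice of the Messages format itself (the original application may use a different Claude version or call the model through LangChain).

```python
import json


def build_claude_request(question: str, context: str, max_tokens: int = 200) -> str:
    """Return a JSON request body asking Claude for a short, grounded answer."""
    body = {
        # Version string required by Bedrock for Claude Messages requests.
        "anthropic_version": "bedrock-2023-05-31",
        # A small token budget is one simple way to keep replies brief.
        "max_tokens": max_tokens,
        # Assumed system prompt; the real application's wording is not given.
        "system": (
            "You answer questions about co-branded credit cards. "
            "Reply in at most three sentences."
        ),
        "messages": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "text",
                        "text": f"Context:\n{context}\n\nQuestion: {question}",
                    }
                ],
            }
        ],
    }
    return json.dumps(body)


if __name__ == "__main__":
    print(build_claude_request(
        "Which card offers Priority Boarding?",
        "Card B: priority boarding on every flight.",
    ))
```

The resulting string would typically be passed as the `body` argument of the `invoke_model` call on a boto3 `bedrock-runtime` client, together with the `modelId` of the chosen Claude model.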

A high-level architecture diagram of the solution is given below:

Technical requirements

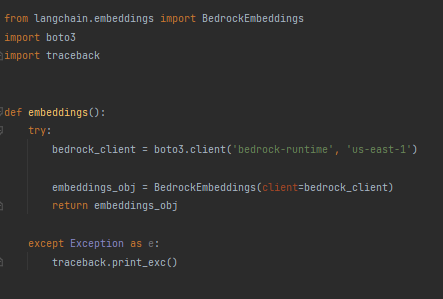

1. Python version: 3.10

2. LangChain

3. boto3

4. botocore

5. FastAPI

AWS Services used

1. Amazon Bedrock

2. Amazon OpenSearch

3. Amazon Elastic Kubernetes Service (Amazon EKS)

4. Amazon Elastic Container Registry (Amazon ECR)

Key features

Some of the key features of the solution are as follows:

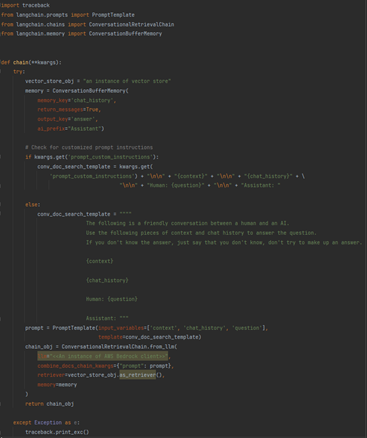

- Implements a Q&A-based approach using an LLM available through Amazon Bedrock

- Utilizes Amazon OpenSearch for searching, visualizing, and analyzing extensive text and unstructured data

- Facilitates semantic search to locate similar text fragments in the vector space

- Lets users select foundation models (FMs) based on their requirements. The application is implemented with Anthropic’s Claude FM, supported by Amazon Bedrock

- Streamlines chat history management with the LangChain framework
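
To make the semantic search feature more concrete, here is a minimal sketch of ranking text fragments by cosine similarity in a vector space. The three-dimensional vectors, the fragment texts, and the function names are made up for illustration; in the solution the embeddings would come from an embedding model and the nearest-neighbor search would be performed by Amazon OpenSearch rather than by hand-written Python.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(
    query_vec: Sequence[float],
    indexed: List[Tuple[str, Sequence[float]]],
    k: int = 2,
) -> List[Tuple[float, str]]:
    """Return the k fragments whose vectors are most similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in indexed]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Toy index: (fragment, embedding). Real embeddings have hundreds of dimensions.
INDEX = [
    ("Priority boarding on all flights", [0.9, 0.1, 0.0]),
    ("First checked bag free", [0.1, 0.9, 0.1]),
    ("Annual fee and APR terms", [0.0, 0.2, 0.9]),
]

if __name__ == "__main__":
    # A query vector that points mostly along the "baggage" direction.
    for score, text in top_k([0.2, 0.8, 0.0], INDEX):
        print(f"{score:.3f}  {text}")
```

Fragments whose vectors point in nearly the same direction as the query score close to 1, which is why a question about baggage can match a fragment that shares few exact words with it.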